How to design, ship, and scale fleet OEM connectivity

Shipping a connected device is straightforward. Shipping a hundred thousand of them – across dozens of countries, with different operators, evolving regulations, and firmware that needs updating in the field – is an entirely different engineering problem.

Most OEM connectivity failures aren't caused by bad hardware. They're caused by architectural decisions made too early, at too small a scale, without accounting for what happens when the fleet is live and growing.

In this article

- The architectural foundation: layers that have to be right

- eSIM and profile management at scale

- Module selection and antenna design

- The six failure modes that will find you

- Fleet monitoring: KPIs that matter

- Frequently asked questions

- Key takeaways

The architectural foundation: layers that have to be right

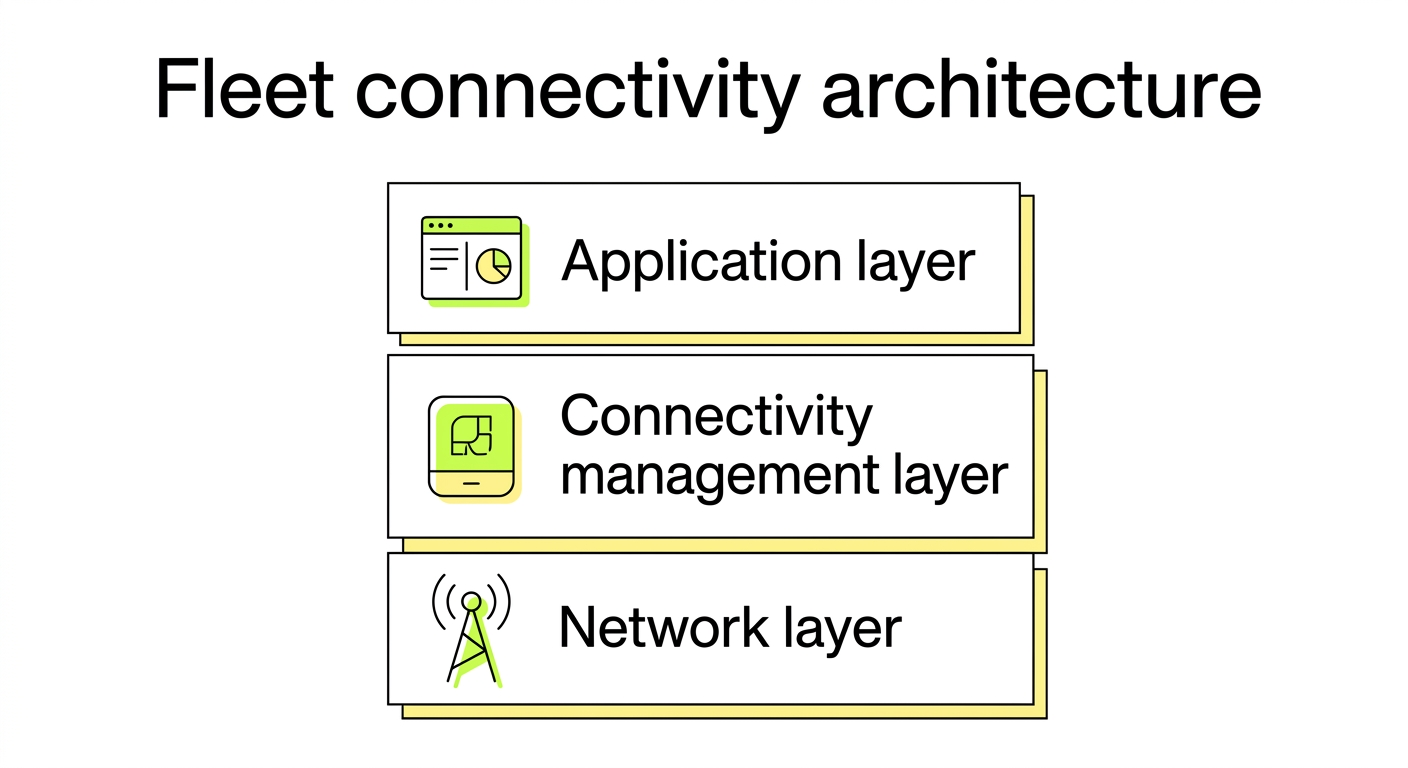

ETSI TR 103 527 recommends a layered, virtualized architecture for massive IoT deployments – separating network, endpoint, and service tiers to isolate faults and scale independently. This isn't theoretical. It's what distinguishes a fleet that can absorb a regional operator outage from one that goes dark.

Three layers determine whether your architecture holds up at scale:

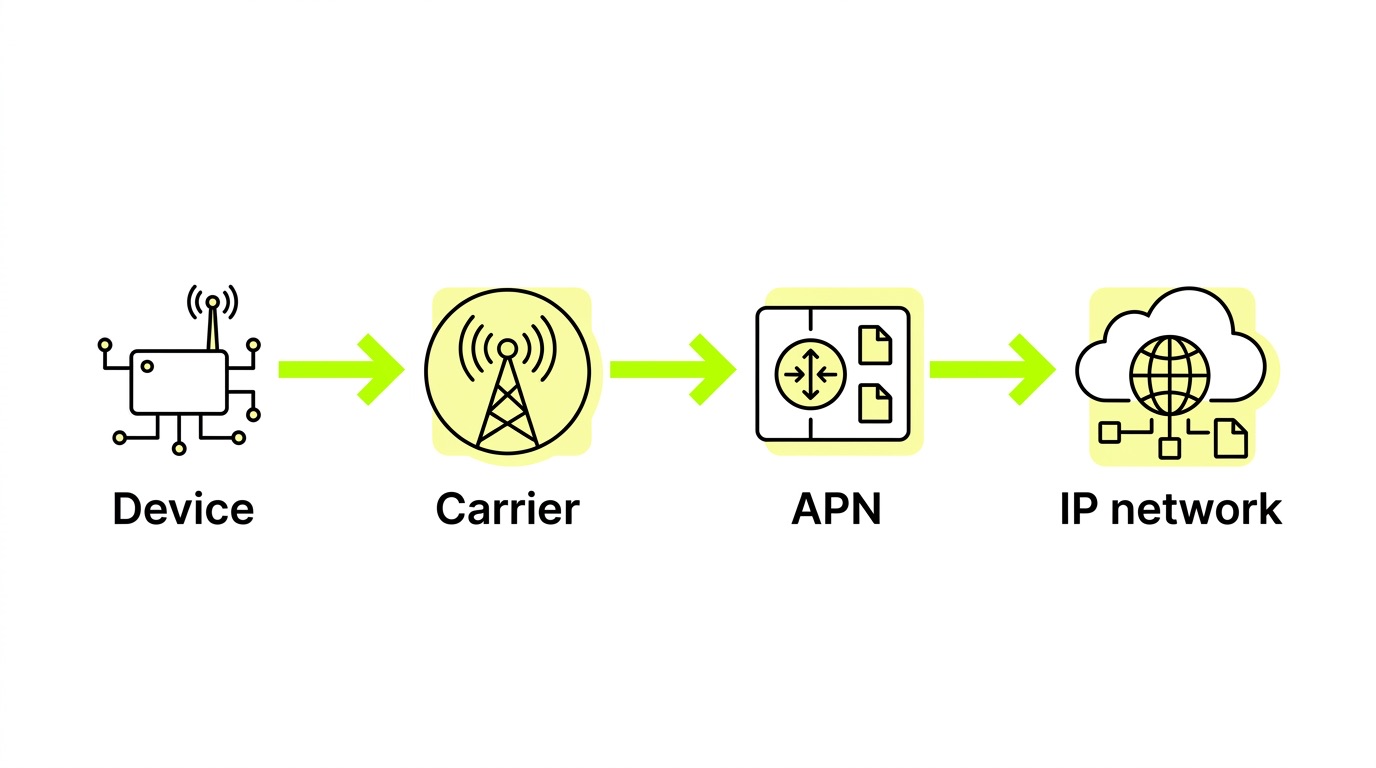

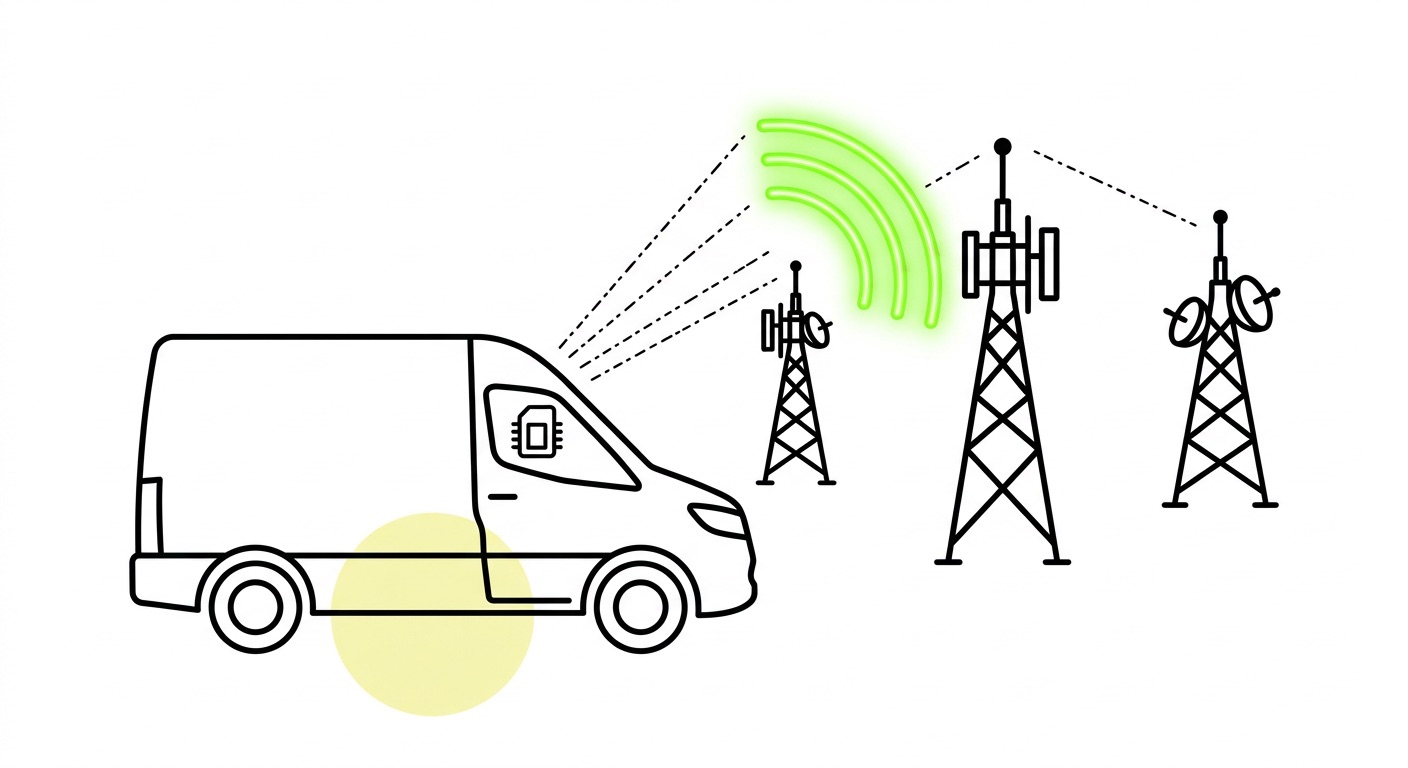

- Network layer: The cellular infrastructure itself – radio access, core network, roaming agreements. Your SIM or eSIM profile determines which operators a device can register on. Multi-network access at this layer means your device isn't hostage to a single carrier's uptime.

- Connectivity management layer: The platform that governs SIM state, data session visibility, billing, and alerts. This is where most OEMs underinvest – and where most fleet-level failures become visible too late.

- Application layer: Your telematics, diagnostics, or data application sitting above the session. This layer can only be reliable if the two below it are solid.

What makes cloud-native architecture the right choice for OEM fleets isn't just scalability – it's geo-redundancy and automatic failover. On-premise SIM management infrastructure hits physical limits quickly and creates single points of failure that cloud-hosted platforms avoid by design. We run our RSP infrastructure on AWS precisely because it lets us serve multi-million SIM deployments with the availability that fleet operations demand – a setup we've documented in detail together with AWS.

The deeper principle: don't architect for your current fleet size. Architect for what happens when it's ten times larger, operating in new markets, with network conditions you haven't encountered yet.

eSIM and profile management at scale

eSIM is the most consequential architectural decision you'll make for a global fleet – not because it eliminates SIM logistics (though it does), but because it changes what's possible once devices are deployed.

The relevant standards for OEM fleets are SGP.22 (M2M eSIM, defining SM-DP+ and SM-SR) and the newer SGP.32 (IoT eSIM, introducing the eIM – eSIM IoT Remote Manager). Understanding the distinction matters in practice:

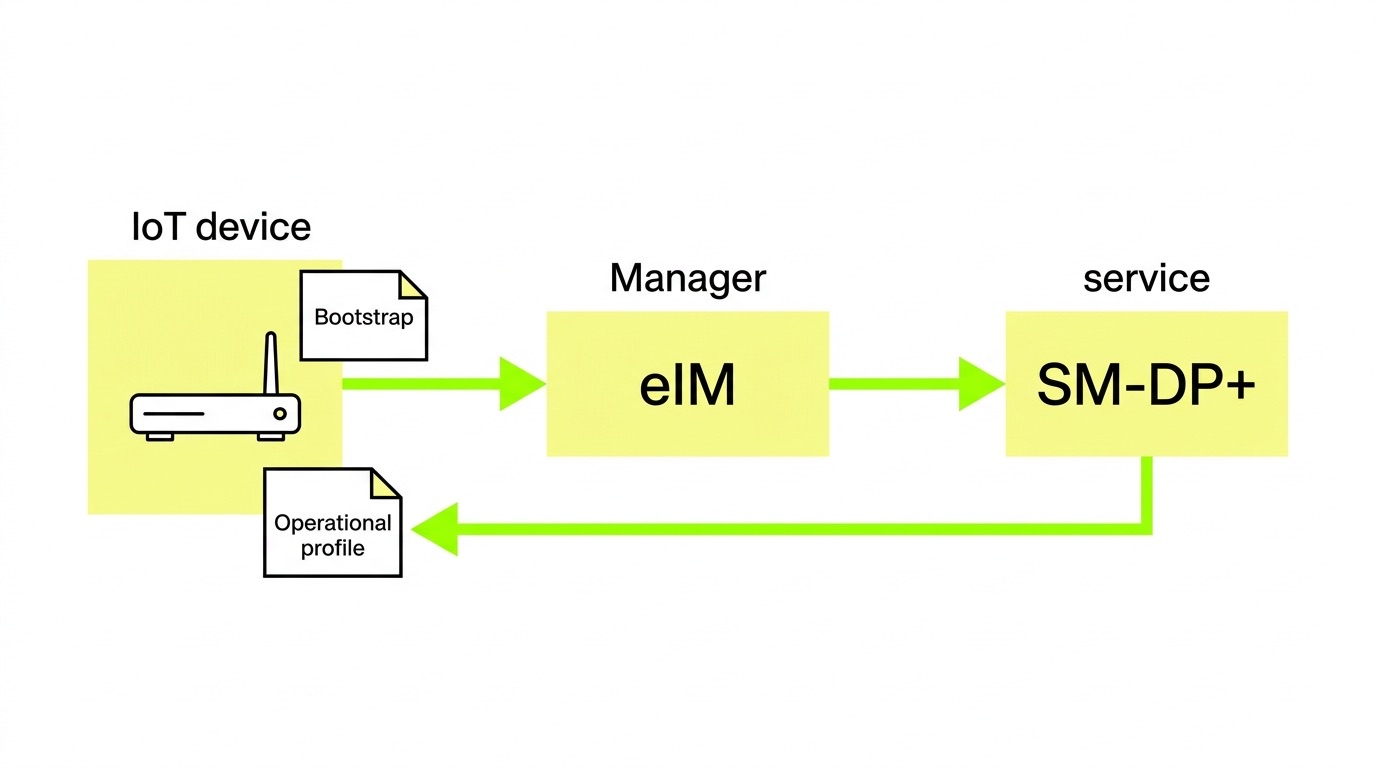

- SGP.22 / SM-DP+ handles profile hosting, provisioning, and lifecycle management. The SM-DP+ is the server-side infrastructure that holds operator profiles and pushes them to devices. This is the mature, commercially deployed standard that most M2M deployments run on today.

- SGP.32 / eIM is the newer IoT-specific standard that removes the SM-SR vendor lock-in present in SGP.22, enables profile downloads without SMS (critical for NB-IoT devices), and supports multiple transport protocols – HTTP/TCP/TLS for broadband devices, CoAP/UDP/DTLS for constrained ones. It also introduces device-side profile initiation, which matters for remote or intermittently connected fleets. Our introduction to SGP.31/32 covers the architecture in more depth.

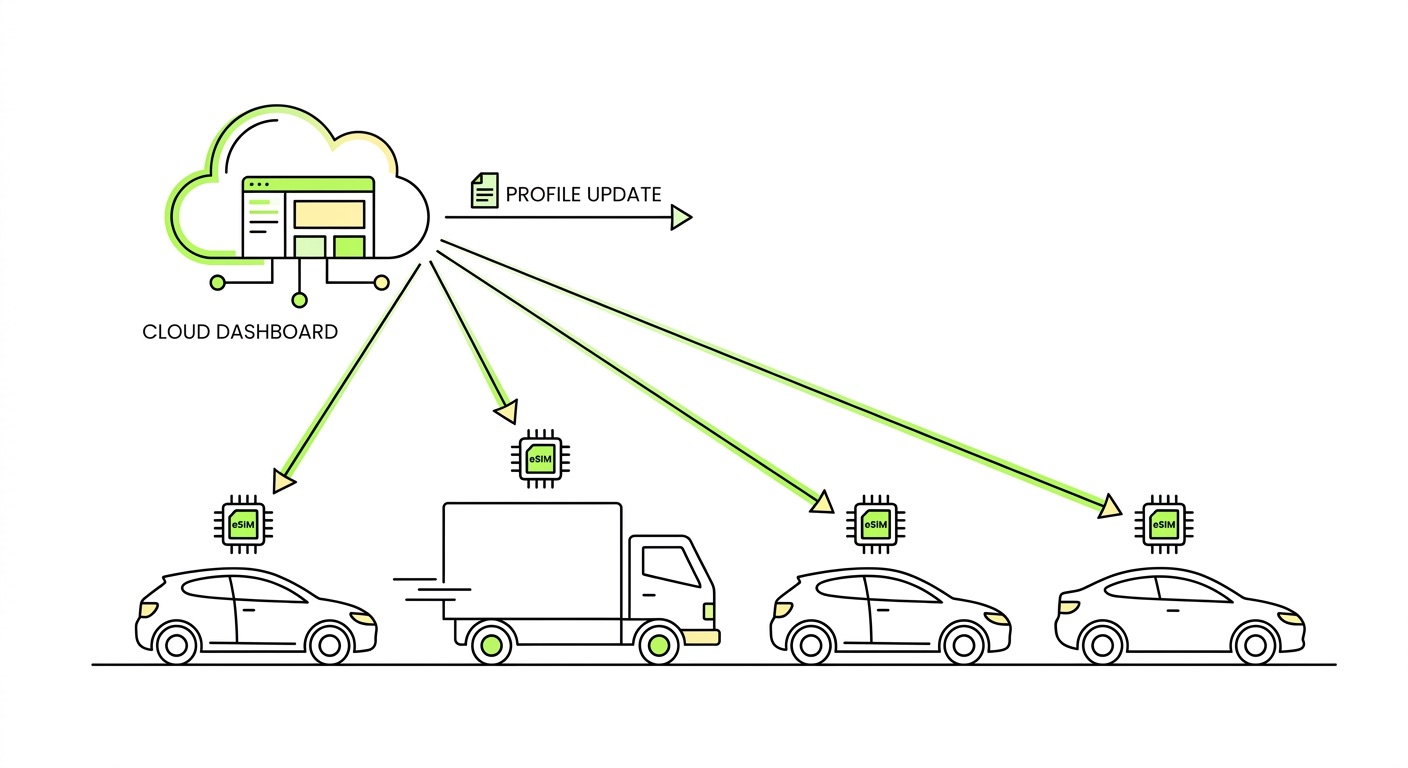

The bootstrap profile approach is the standard deployment pattern for global OEM fleets: devices ship with a generic initial profile that provides basic connectivity, then download operational profiles for specific regions or operators after deployment. This decouples SKU management from regional operator decisions – one hardware variant, globally deployable, with carrier selection happening in software.

In practice, this enables three things that matter operationally. First, if an operator sunsets their 2G/3G network or degrades service quality, you push a profile update over the air – no field recalls required. Second, multi-network eSIMs provide failover within individual countries, not just across borders: if one network is congested or down, the device switches. That's a reliability architecture, not just a coverage feature. Third, local profiles downloadable via eSIM resolve permanent roaming restrictions without physical SIM logistics – a growing concern as more markets tighten rules. Our guide to navigating roaming restrictions covers the regional specifics.

The operational point that gets missed: profile portability only delivers value if your connectivity management platform can follow the switch – updating state, tracking the new session, maintaining billing continuity. Profile switching and operational visibility have to be architected together, not bolted on separately.
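To make "following the switch" concrete, here is a minimal sketch of the platform-side bookkeeping. The `SimRecord` type, its field names, and the profile labels are hypothetical, invented for illustration; they are not taken from any real connectivity management platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class SimRecord:
    """Hypothetical platform-side record for one eUICC."""
    eid: str
    active_profile: str        # e.g. "bootstrap" or an operational profile label
    billing_account: str       # must survive every profile switch
    history: list = field(default_factory=list)


def apply_profile_switch(record: SimRecord, new_profile: str, when: datetime) -> SimRecord:
    """Update state after a profile switch while keeping billing and audit trail intact."""
    if new_profile == record.active_profile:
        return record  # duplicate notification: nothing changes
    # Keep an audit entry so sessions can later be attributed to the right profile.
    record.history.append((when, record.active_profile, new_profile))
    record.active_profile = new_profile
    return record


sim = SimRecord(eid="EID-EXAMPLE-0001", active_profile="bootstrap", billing_account="acct-42")
apply_profile_switch(sim, "operator-de", datetime(2024, 1, 1, tzinfo=timezone.utc))
print(sim.active_profile, sim.billing_account, len(sim.history))
```

The point of the sketch is that the billing link and the switch history live on the same record as the active profile, so a switch cannot update one without the others.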

Module selection and antenna design

Hardware choices derail more "global" fleet plans than connectivity does. We've seen this go wrong repeatedly – OEMs locking in a module based on unit cost, only to discover it lacks BIP support for eSIM profile management, or uses a radio technology that's being sunsetted in key markets.

Radio technology selection is where the most consequential tradeoffs live:

- CAT-1 bis is the default recommendation for moderate-data fleet applications – telematics, diagnostics, GPS. Single antenna, cost-effective, forward-compatible with 4G/5G networks, and supports eDRX and PSM for power efficiency where needed.

- LTE-M (CAT-M1) is the low-power choice for vehicle fleets and other moving assets. It supports connected-mode cell handover – a feature NB-IoT lacks – which is why it, rather than NB-IoT, belongs on the shortlist for anything mobile. If your device moves, handover support is a requirement, not a tradeoff.

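- NB-IoT should be reserved for stationary, low-data, low-power assets only. It does not support connected-mode handover, so a moving device drops its connection at cell boundaries and has to re-establish it, which shows up in the field as intermittent, hard-to-diagnose failures.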

- 5G is appropriate for high-throughput applications such as HD video or dense sensor arrays. It adds cost and complexity; only specify it when the use case genuinely requires it.
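One way to read the list above is as a small decision function. The sketch below is an interpretation, not a rule from any standard: the function name and its three boolean inputs are assumptions chosen for illustration, and real selection also depends on regional coverage, cost, and module availability.

```python
def suggest_radio_technology(is_mobile: bool, high_throughput: bool, low_power_low_data: bool) -> str:
    """Map coarse requirements onto the four options discussed in the list."""
    if high_throughput:
        # HD video, dense sensor arrays: the only case where 5G earns its cost.
        return "5G"
    if low_power_low_data:
        # NB-IoT only when the asset never moves; otherwise handover is needed.
        return "LTE-M" if is_mobile else "NB-IoT"
    # Moderate-data telematics and diagnostics: the default recommendation.
    return "CAT-1 bis"


print(suggest_radio_technology(is_mobile=True, high_throughput=False, low_power_low_data=True))
print(suggest_radio_technology(is_mobile=False, high_throughput=False, low_power_low_data=True))
```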

For established module vendors – Quectel, u-blox, Telit, SIMCom – firmware support track record matters as much as spec-sheet capabilities. A module with strong firmware support can receive critical patches over its deployment lifetime. One without it becomes a long-term liability.

Antenna design is where bench testing routinely fails OEM teams. Optimize for low-band frequencies (700/900 MHz) for indoor and deep-coverage penetration – this matters more than peak throughput for most fleet applications. Enclosure detuning is real and frequently underestimated: an antenna that performs well on a bench may perform poorly once installed in a vehicle chassis or equipment housing. Test under realistic installation conditions, not just controlled lab environments. CAT-1 bis uses a single antenna, which simplifies integration and reduces enclosure design complexity compared to MIMO configurations.

BIP (Bearer Independent Protocol) support in the cellular module is a hard requirement for M2M eSIM remote profile management. If your module shortlist doesn't confirm BIP support, resolve that before committing to hardware – retrofitting is not an option.
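A shortlist review along these lines can be written down as a simple check. The sketch below assumes a hypothetical `ModuleCandidate` record filled in from vendor confirmations; the module names are placeholders and do not describe any real product.

```python
from dataclasses import dataclass

LEGACY_TECHNOLOGIES = frozenset({"2G", "3G"})  # technologies being sunset in many markets


@dataclass(frozen=True)
class ModuleCandidate:
    """Hypothetical shortlist entry, populated from vendor documentation."""
    name: str
    supports_bip: bool
    radio_technologies: frozenset


def shortlist_blockers(module: ModuleCandidate) -> list:
    """Return the reasons a module should not be committed to, if any."""
    blockers = []
    if not module.supports_bip:
        blockers.append("no confirmed BIP support")
    if not (module.radio_technologies - LEGACY_TECHNOLOGIES):
        blockers.append("no radio technology beyond 2G/3G")
    return blockers


candidates = [
    ModuleCandidate("module-a", True, frozenset({"CAT-1 bis", "2G"})),
    ModuleCandidate("module-b", False, frozenset({"2G"})),
]
for candidate in candidates:
    print(candidate.name, shortlist_blockers(candidate))
```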

The six failure modes that will find you

We've covered these in detail in our guide to common connectivity failures in fleet deployments, but for an architectural audience the framing is different: these aren't just operational problems – they're design decisions made, or skipped, before deployment.

The APN issue deserves particular emphasis: it is the most common and most preventable fleet connectivity failure. A device can successfully register on the radio layer – showing signal, appearing connected – and still fail to establish a data session if the APN configuration is wrong. A device that appears connected but isn't transmitting is harder to diagnose than one that's simply offline. Validate APN strings, authentication type (PAP vs. CHAP), and credentials per deployment region before you scale. With 1oT's single-APN setup, most of this per-region work goes away: you configure one APN once.
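For deployments that do manage APNs per region, the pre-scale validation can start with a static check like the sketch below. The `ApnConfig` type and the example values are hypothetical. The label pattern follows DNS-style naming (letters, digits, hyphens), and the rule that PAP/CHAP always need credentials is an assumption that some operators relax. A static check like this complements, and does not replace, opening a real data session in each region.

```python
import re
from dataclasses import dataclass
from typing import Optional

VALID_AUTH_TYPES = {"NONE", "PAP", "CHAP"}
# DNS-style label: alphanumerics and hyphens, not starting or ending with a hyphen.
APN_LABEL = re.compile(r"^[A-Za-z0-9]([A-Za-z0-9-]*[A-Za-z0-9])?$")


@dataclass
class ApnConfig:
    """Hypothetical per-region APN settings baked into firmware or provisioning."""
    region: str
    apn: str
    auth_type: str
    username: Optional[str] = None
    password: Optional[str] = None


def validate_apn(cfg: ApnConfig) -> list:
    """Return a list of human-readable problems; empty means the config looks sane."""
    problems = []
    if not all(APN_LABEL.match(label) for label in cfg.apn.split(".")):
        problems.append(f"{cfg.region}: malformed APN string {cfg.apn!r}")
    if cfg.auth_type not in VALID_AUTH_TYPES:
        problems.append(f"{cfg.region}: unknown auth type {cfg.auth_type!r}")
    elif cfg.auth_type != "NONE" and not (cfg.username and cfg.password):
        # Assumption: this sketch treats missing credentials as an error.
        problems.append(f"{cfg.region}: {cfg.auth_type} selected but credentials missing")
    return problems


configs = [
    ApnConfig("eu", "iot.example", "CHAP", "user", "secret"),
    ApnConfig("us", "iot example", "PAP"),
]
for cfg in configs:
    print(cfg.region, validate_apn(cfg) or "ok")
```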

The signaling storm problem is firmware-level and cannot be retrofitted after deployment. Exponential backoff with a maximum retry ceiling needs to be in the firmware before production. This is an architectural decision with a hard deadline: it has to happen before units leave the factory.
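The retry logic described above can be sketched as follows. Production firmware would typically implement this in C on the device; Python is used here only to show the shape. The parameter values are illustrative assumptions, and the random jitter is an addition not mentioned in the text, included because it is commonly used to keep a fleet from retrying in lockstep.

```python
import random
import time


def backoff_delays(base_s=5.0, factor=2.0, max_delay_s=3600.0, max_retries=8, jitter=0.2, rng=None):
    """Yield one delay per retry: exponential growth, capped, with optional jitter."""
    rng = rng or random.Random()
    for attempt in range(max_retries):
        delay = min(base_s * factor ** attempt, max_delay_s)
        spread = delay * jitter
        yield delay + rng.uniform(-spread, spread)


def connect_with_backoff(try_connect, sleep=time.sleep, **backoff_kwargs):
    """Call try_connect() until it succeeds or the retry ceiling is reached."""
    if try_connect():
        return True
    for delay in backoff_delays(**backoff_kwargs):
        sleep(delay)
        if try_connect():
            return True
    # Ceiling reached: stop signaling and leave recovery to a slower mechanism.
    return False


# Deterministic view of the schedule (jitter disabled).
print(list(backoff_delays(base_s=1, factor=2, max_delay_s=10, max_retries=6, jitter=0)))
```

The two properties that matter are both visible here: delays grow instead of repeating at a fixed interval, and the loop ends after `max_retries` rather than hammering the network indefinitely.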

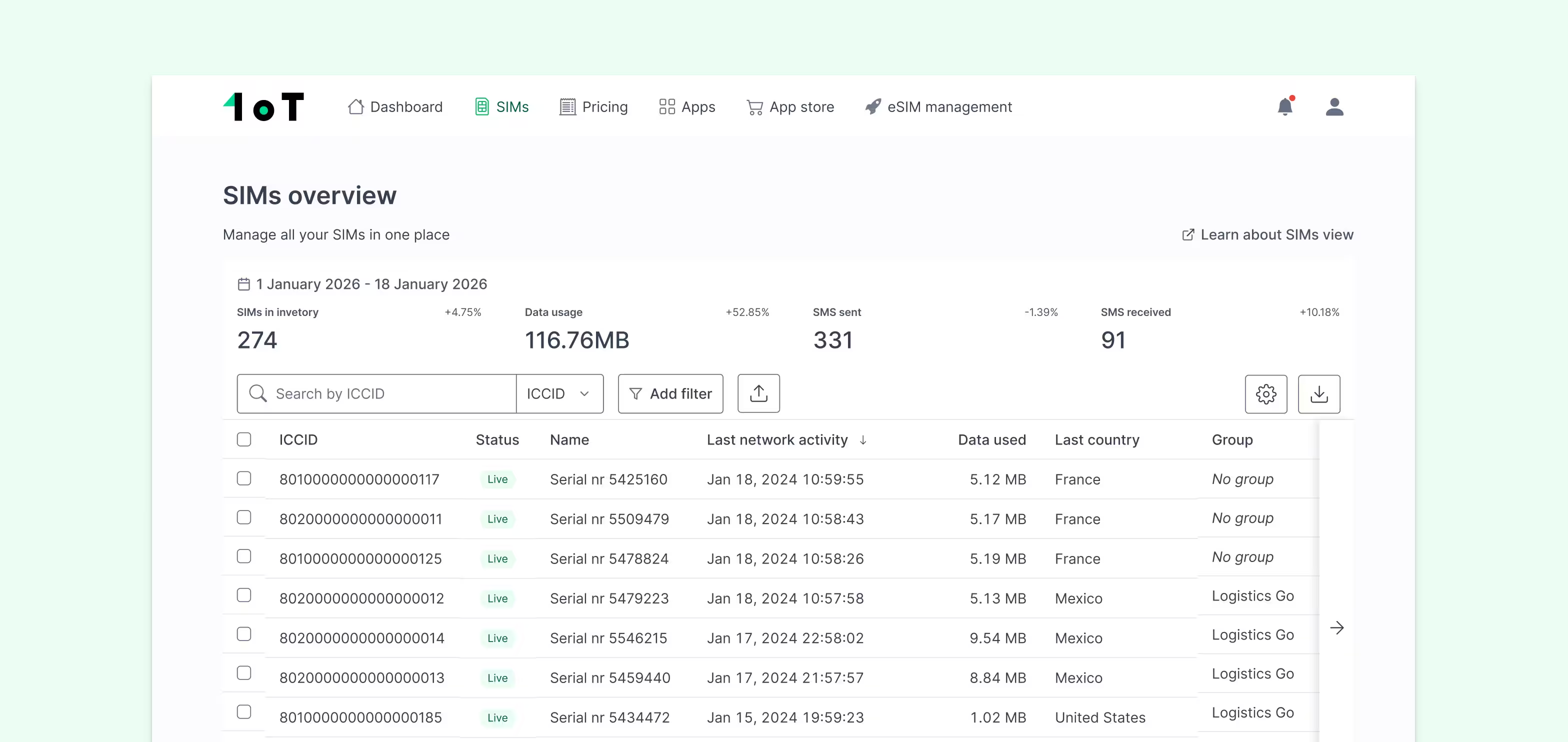

Fleet monitoring: KPIs that matter

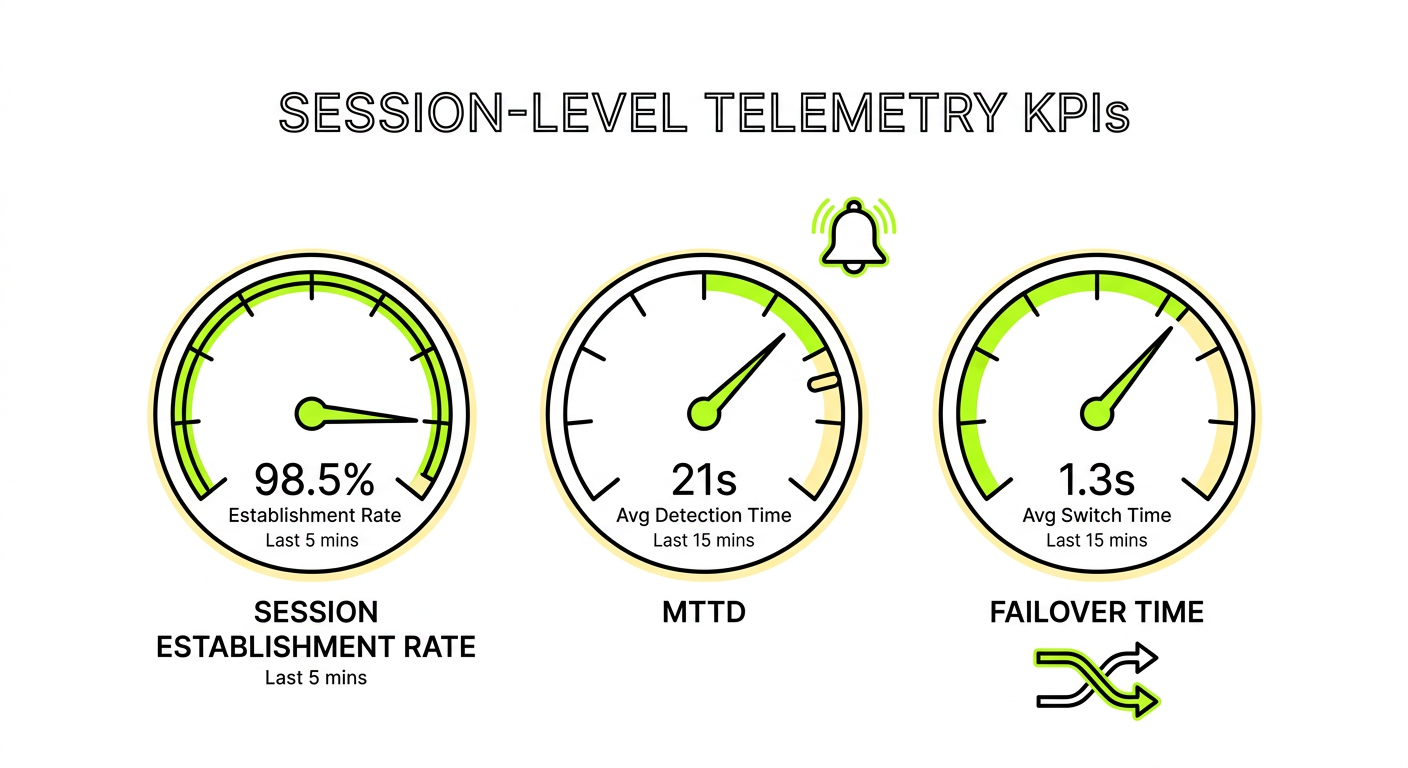

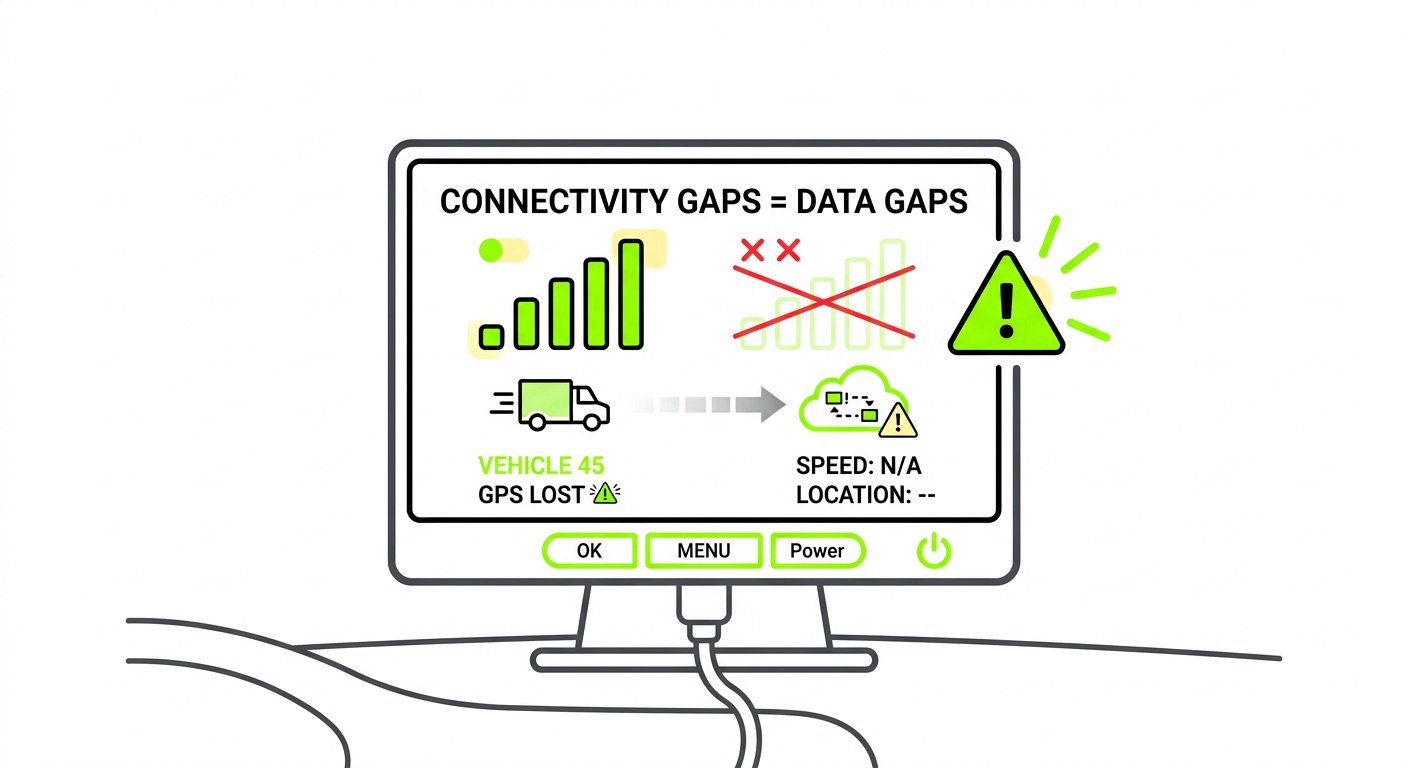

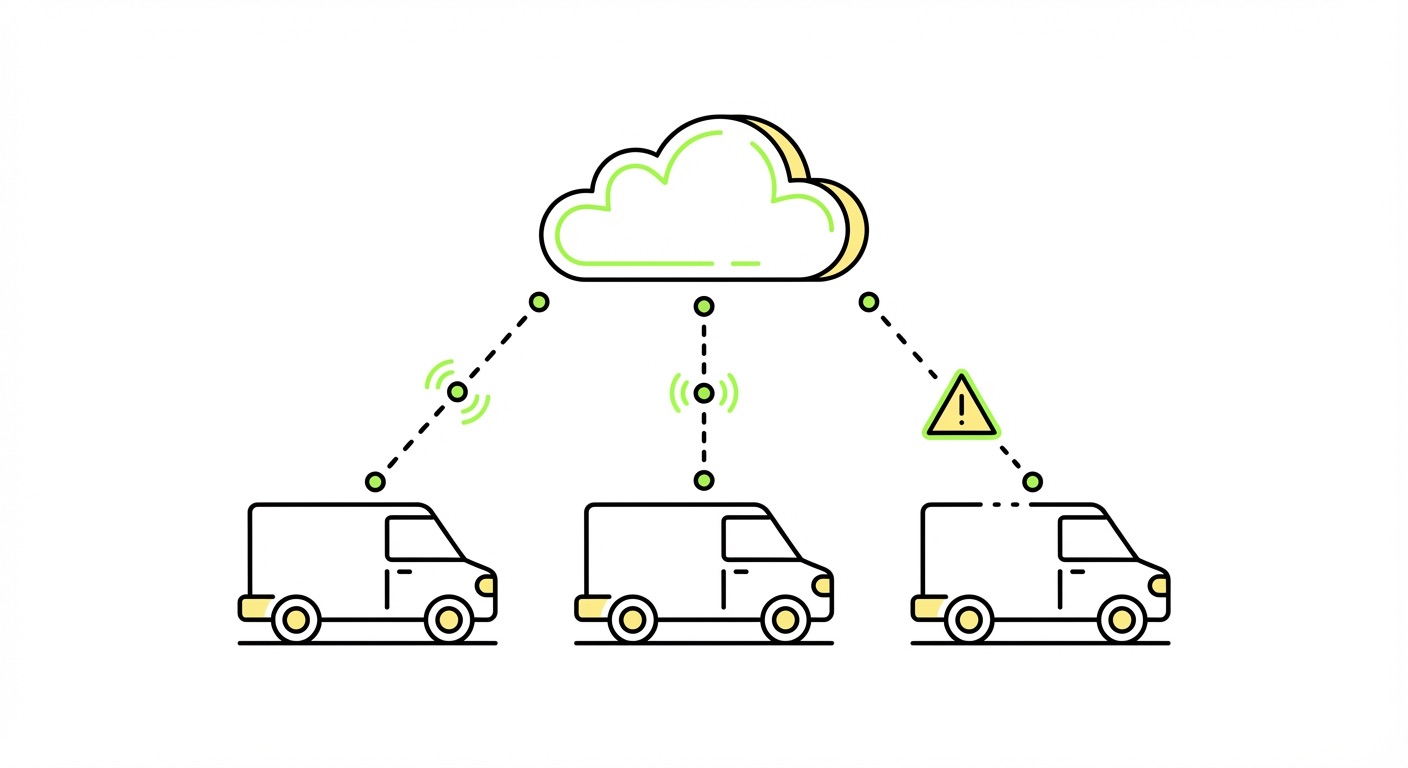

The operational moat in fleet connectivity isn't coverage – it's visibility and control at the session level. Monthly usage summaries tell you what happened. Real-time session telemetry tells you when a session dropped, which PLMN a device was on when it failed, and whether the issue is systematic or isolated. Those are very different problems with very different responses.

Primary uptime metrics worth tracking:

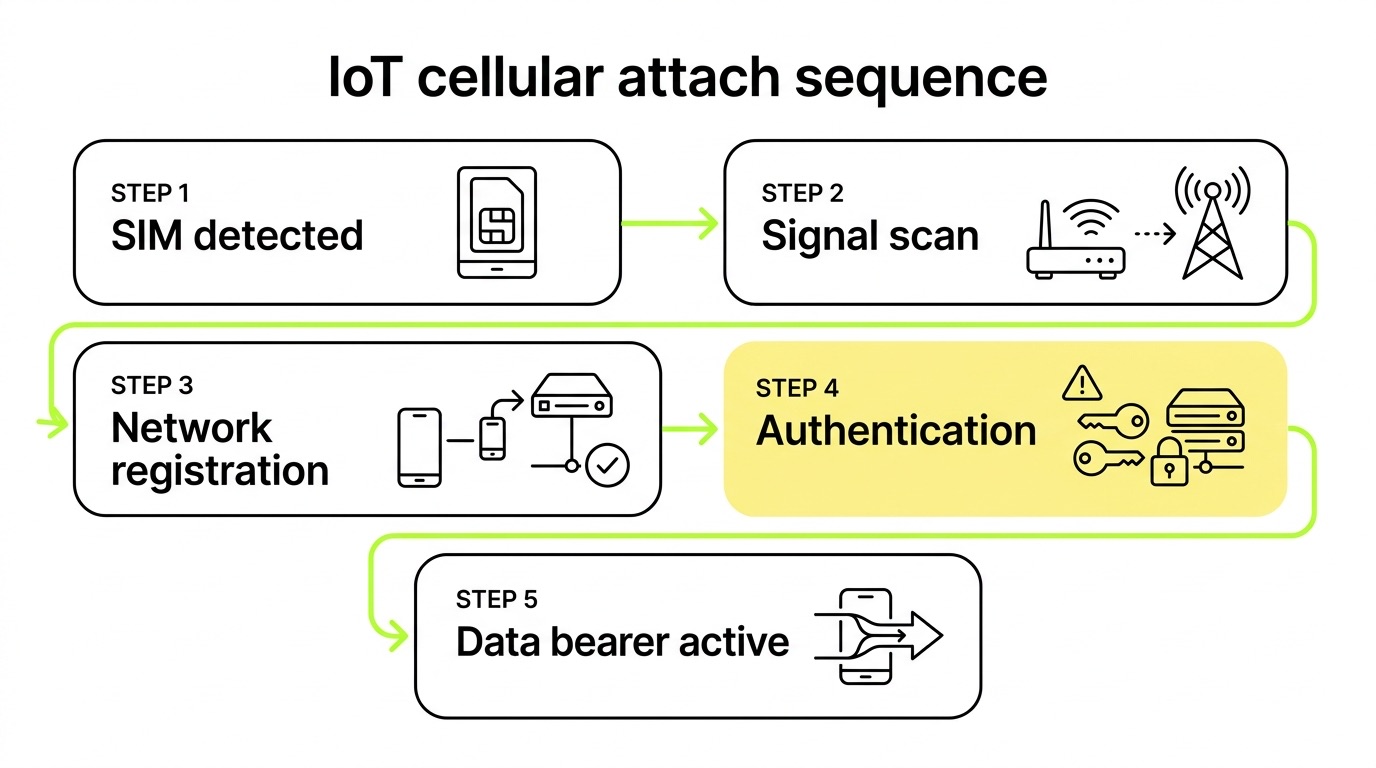

- Data session establishment rate: Network registration does not equal data connectivity. Track PDP context activation success as your primary uptime signal. High-performing deployments target >99.5% session establishment rates.

- Mean time to detect (MTTD) connectivity loss: Target under five minutes, driven by automated alerting. If you're finding out about fleet outages from end customers, your monitoring architecture needs redesigning.

- Automatic failover time: Multi-network SIMs should switch to an alternative network within seconds during a primary operator outage. Benchmark this in pre-deployment testing, not after go-live.
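As a rough illustration of how the first two metrics might be computed from platform data, consider the sketch below. The function names and the sample numbers are made up for the example; only the 99.5% and five-minute targets come from the list above.

```python
from datetime import datetime, timedelta

SESSION_RATE_TARGET = 0.995           # ">99.5%" session establishment
MTTD_TARGET = timedelta(minutes=5)    # "under five minutes"


def session_establishment_rate(successes: int, attempts: int) -> float:
    """Share of PDP context activation attempts that succeeded."""
    if attempts <= 0:
        raise ValueError("no activation attempts recorded")
    return successes / attempts


def mean_time_to_detect(incidents) -> timedelta:
    """Average gap between connectivity loss and the alert, per incident."""
    if not incidents:
        raise ValueError("no incidents recorded")
    total = sum((detected - lost for lost, detected in incidents), timedelta())
    return total / len(incidents)


t0 = datetime(2024, 1, 1, 2, 0)
incidents = [(t0, t0 + timedelta(minutes=2)), (t0, t0 + timedelta(minutes=6))]

rate = session_establishment_rate(successes=9960, attempts=10000)
mttd = mean_time_to_detect(incidents)
print(f"session rate {rate:.3%} meets target: {rate > SESSION_RATE_TARGET}")
print(f"MTTD {mttd} meets target: {mttd < MTTD_TARGET}")
```

Note that an average can hide outliers: in the sample data one incident took six minutes to detect, even though the mean is within target.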

Anomaly detection is where the proactive work happens. Flag devices retrying attach or PDP context activation at abnormal frequencies – hundreds of attempts per hour – before they trigger operator-level throttling or suspension. Track APN success rates by region and profile to catch configuration drift as you expand into new markets. High network reselection frequency in mobile fleets often points to poor network selection logic or mobility handling that needs firmware attention.
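The retry-frequency check is simple to prototype. In the sketch below, the event format (one `(device_id, hour_bucket)` tuple per attach or PDP activation attempt) and the threshold of 100 attempts per hour are assumptions for illustration; a real threshold should come from your operators' signaling policies.

```python
from collections import Counter


def flag_retry_storms(events, threshold_per_hour=100):
    """Return device IDs that hit the threshold in at least one hourly bucket."""
    counts = Counter(events)  # (device_id, hour_bucket) -> number of attempts
    return sorted({device for (device, _hour), n in counts.items() if n >= threshold_per_hour})


events = (
    [("dev-1", "2024-01-01T02")] * 250    # misbehaving device
    + [("dev-2", "2024-01-01T02")] * 3    # normal reconnects
    + [("dev-3", "2024-01-01T02")] * 60   # busy, but split across two hours
    + [("dev-3", "2024-01-01T03")] * 60
)
print(flag_retry_storms(events))
```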

Forward-looking metrics often get deprioritized until they become crises. Network sunset tracking by module cohort turns a reactive emergency into a planned migration: know which devices are running 2G/3G-only modules in markets with announced sunset dates, and act before the shutdown. Bootstrap-to-operational profile activation time benchmarks OTA provisioning performance under varied conditions – slow profile downloads during initial deployment create disproportionate support load that compounds quickly.
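Sunset tracking by cohort can be sketched as a join between device inventory and announced shutdown dates. Everything in the example is hypothetical: the `Device` type, the country codes "XX" and "YY", and the dates are placeholders, not real operator announcements.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Device:
    """Hypothetical inventory entry."""
    device_id: str
    country: str
    technologies: frozenset


def devices_at_risk(devices, sunsets, today, horizon_days=365):
    """Flag devices whose every supported technology has an announced sunset
    in their country, with the last shutdown inside the planning horizon."""
    flagged = []
    for dev in devices:
        dates = [sunsets.get((dev.country, tech)) for tech in dev.technologies]
        if not dates or any(d is None for d in dates):
            continue  # at least one technology has no announced sunset
        last_shutdown = max(dates)
        if (last_shutdown - today).days <= horizon_days:
            flagged.append((dev.device_id, last_shutdown))
    return sorted(flagged, key=lambda item: item[1])


sunsets = {("XX", "2G"): date(2025, 6, 30), ("XX", "3G"): date(2025, 12, 31)}
fleet = [
    Device("d1", "XX", frozenset({"2G", "3G"})),
    Device("d2", "XX", frozenset({"2G", "LTE-M"})),
    Device("d3", "YY", frozenset({"2G"})),
]
print(devices_at_risk(fleet, sunsets, today=date(2025, 1, 1)))
```

Sorting by shutdown date gives a migration queue: the devices that go dark first are at the top.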

The operators who handle large fleets without losing their minds are the ones who've built automated alerting and workflow triggers around these metrics before they need them. The experience of managing 300,000 SIMs across multiple providers makes the value of that early investment concrete.

Frequently asked questions

What's the difference between an orchestration layer and a connectivity management platform?

An orchestration layer routes commands between systems. A connectivity management platform provides session-level telemetry, SIM state management, billing, and automated alerting – the operational depth needed to actually run a fleet. Orchestration without session-level visibility is, functionally, a dashboard with no diagnostic power. The distinction matters when you're trying to understand why 3% of your fleet stopped transmitting at 2am. Our analysis of orchestration layers vs. holistic connectivity management platforms goes deeper on this.

When should I use eSIM vs. physical SIM for a fleet deployment?

eSIM is the right choice for any fleet that operates across multiple countries, has a deployment lifetime exceeding three years, or requires the ability to change operators without physical access to devices. Physical SIMs remain appropriate for single-market, shorter-lifecycle deployments where simplicity is the priority. For most OEM fleets with global ambitions, eSIM is the default – not the premium option.

How do I prevent connectivity issues from compounding as I scale?

Issues that affect 0.1% of a 10,000-device fleet are manageable manually. The same rate at 500,000 devices is a full-time crisis. The answer is building automated detection and response into your connectivity management platform before you need it – not after. Automated SIM state changes, alerting thresholds, and workflow triggers need to be configured and tested at small scale so they're mature by the time you hit production volumes. The SIM/eSIM troubleshooting checklist is a useful baseline for what your team should be able to resolve without escalating.
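The arithmetic behind that answer is worth spelling out. The helper name below is made up for the example; the fleet sizes and the 0.1% rate are the ones used above.

```python
def affected_devices(fleet_size: int, issue_rate: float) -> int:
    """Number of devices hit by an issue occurring at the given rate."""
    return round(fleet_size * issue_rate)


for size in (10_000, 500_000):
    print(f"{size:>7} devices at 0.1% -> {affected_devices(size, 0.001)} affected")
```

Ten devices can be handled one ticket at a time; five hundred at once cannot, which is why detection and response need to be automated before the fleet grows.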

Key takeaways

- Architecture before deployment: The decisions that determine fleet reliability – module selection, radio technology, APN validation, firmware retry logic, SIM type – cannot be corrected at scale without significant cost. Make them deliberately in pre-production.

- eSIM is an operational architecture, not just a logistics convenience: Profile portability, multi-network access, and OTA carrier switching only deliver value when your connectivity management platform can track and manage the resulting state changes.

- Moving assets need handover: NB-IoT lacks connected-mode cell handover, which makes LTE-M the low-power choice for mobile fleets. For any mobile fleet application, NB-IoT's limitation is a disqualifier, not a tradeoff.

- Session-level telemetry is non-negotiable: Monthly summaries tell you what happened. Real-time session data tells you what's happening. Fleet management at scale requires the latter.

- Multi-network SIMs with automatic failover are a reliability architecture: Single-carrier SIM dependency is a designed-in single point of failure. Eliminate it by design, not as a retrofit.

If you're designing connectivity for a fleet that needs to operate reliably across borders and at scale, explore 1oT's fleet management connectivity or review global multi-network coverage to see how automatic network switching works in practice. For OEMs evaluating eSIM infrastructure for their devices, the IoT eSIM platform is a practical starting point.
